Why This Became My Next Experiment

In my previous AI experiment, I tried cold start SFT on a base model just to get my hands dirty with one part of the post training pipeline. After that I kept reading more and more about RL, and this part honestly felt the most magical to me. RL is basically training a model by giving it reward signals on its outputs, so that over time it learns to produce better outputs through trial and feedback.

For a first project, RLVR felt like the cleanest place to start. RLVR stands for reinforcement learning with verifiable rewards. Instead of asking humans whether an answer is good or bad, you use problems where correctness can be checked automatically. Math is the classic example. If the answer is right, reward it. If it is wrong, do not. That is why this felt like the best starter project for me. The reward and the verification are both automatic, so I could focus on understanding the RL loop itself.

Why GRPO And Why TRL

The algorithm I picked was GRPO, Group Relative Policy Optimization, the one people often associate with DeepSeek style RL. The thing that made GRPO click for me was that it does not need a separate critic model. For the same prompt, the model generates a group of responses, each response's reward is compared against the rest of the group, and that relative score becomes the learning signal. That makes it much lighter than PPO style setups, where you also have to train and maintain a separate value model just to estimate how good an output is.
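To make "compared relative to each other" concrete, here is a minimal sketch of the group-relative advantage as it is usually described: each rollout's reward minus the group mean, divided by the group standard deviation. The function name, the use of population standard deviation, and the `eps` value are my own choices for illustration, not taken from TRL.

```python
from statistics import mean, pstdev


def group_relative_advantages(rewards, eps=1e-4):
    """Normalize each reward against the mean and std of its own group.

    `rewards` holds the rewards of all rollouts for ONE prompt.
    `eps` (an assumed value) avoids division by zero when all rewards match.
    """
    mu = mean(rewards)
    sigma = pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


# One prompt, four rollouts, binary correctness rewards.
mixed = group_relative_advantages([1.0, 0.0, 0.0, 1.0])
# All four rollouts wrong: every advantage is zero, so there is nothing to learn from.
all_wrong = group_relative_advantages([0.0, 0.0, 0.0, 0.0])

print(mixed)
print(all_wrong)
```

Correct rollouts get a positive advantage and wrong ones a negative advantage, but only when the group disagrees. If every rollout in a group gets the same reward, all advantages are zero.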

For the framework, I used the TRL library by Hugging Face, specifically the GRPOTrainer class. This was nice because the overall workflow felt close to the kind of training setup I had already touched in SFT, except now inference and training were happening together in the same loop. And that is also exactly why RL is more expensive than SFT. In SFT, the model sees an example, computes loss, updates, and moves on. In RL, for every step, the model has to generate multiple responses, score them, compare them, and only then update. So you are doing generation plus training together. Very quickly this becomes expensive if you are a GPU poor person surviving on cloud credits.

Model, Dataset, And The Basic Idea

For the model I picked Qwen2.5 1.5B Instruct. For the dataset I picked GSM8K, which is probably the most obvious beginner friendly RLVR dataset because the task is simple to verify.

The setup was:

- Take a small instruct model from Hugging Face

- Give it GSM8K math problems

- Make it generate multiple rollouts for each prompt

- Parse the final numeric answer, basically trying to extract the last answer number from the output

- Compare that answer against the gold answer

- Give reward: 1.0 if it matches, 0.0 if it does not

- Let GRPO update the model toward higher reward rollouts
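A minimal sketch of the reward step in that list, assuming a "take the last number in the text" parser. The function names and the regex are illustrative, not the exact code from my run. The one thing I am relying on from the dataset is that GSM8K reference solutions end with `#### <number>`.

```python
import re

# Matches integers and decimals, with optional sign and thousands separators.
NUMBER_RE = re.compile(r"-?\d[\d,]*(?:\.\d+)?")


def extract_last_number(text):
    """Return the last number found in `text` as a float, or None."""
    matches = NUMBER_RE.findall(text)
    if not matches:
        return None
    return float(matches[-1].replace(",", ""))


def gold_from_gsm8k(answer_field):
    """Pull the final answer out of a GSM8K solution ending in '#### <number>'."""
    return extract_last_number(answer_field.split("####")[-1])


def correctness_reward(completion, gold):
    """Binary reward: 1.0 if the parsed answer matches the gold answer, else 0.0."""
    predicted = extract_last_number(completion)
    if predicted is None or gold is None:
        return 0.0
    return 1.0 if abs(predicted - gold) < 1e-6 else 0.0


gold = gold_from_gsm8k("5 + 13 = 18\n#### 18")
print(correctness_reward("She has 5 + 13 = 18 apples. The answer is 18.", gold))
print(correctness_reward("She has 5 + 13 = 19 apples. The answer is 19.", gold))
```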

At a high level, the loop was simple.

GSM8K question

↓

Formatted prompt

↓

4 rollouts are generated from the model

↓

Reward parser extracts the final answer

↓

Parsed answer is compared with the gold answer

↓

GRPO scores the rollouts relative to each other

↓

Model weights are updated

That simplicity is what made this project a very good first step into RL for me.

Training Run On Modal

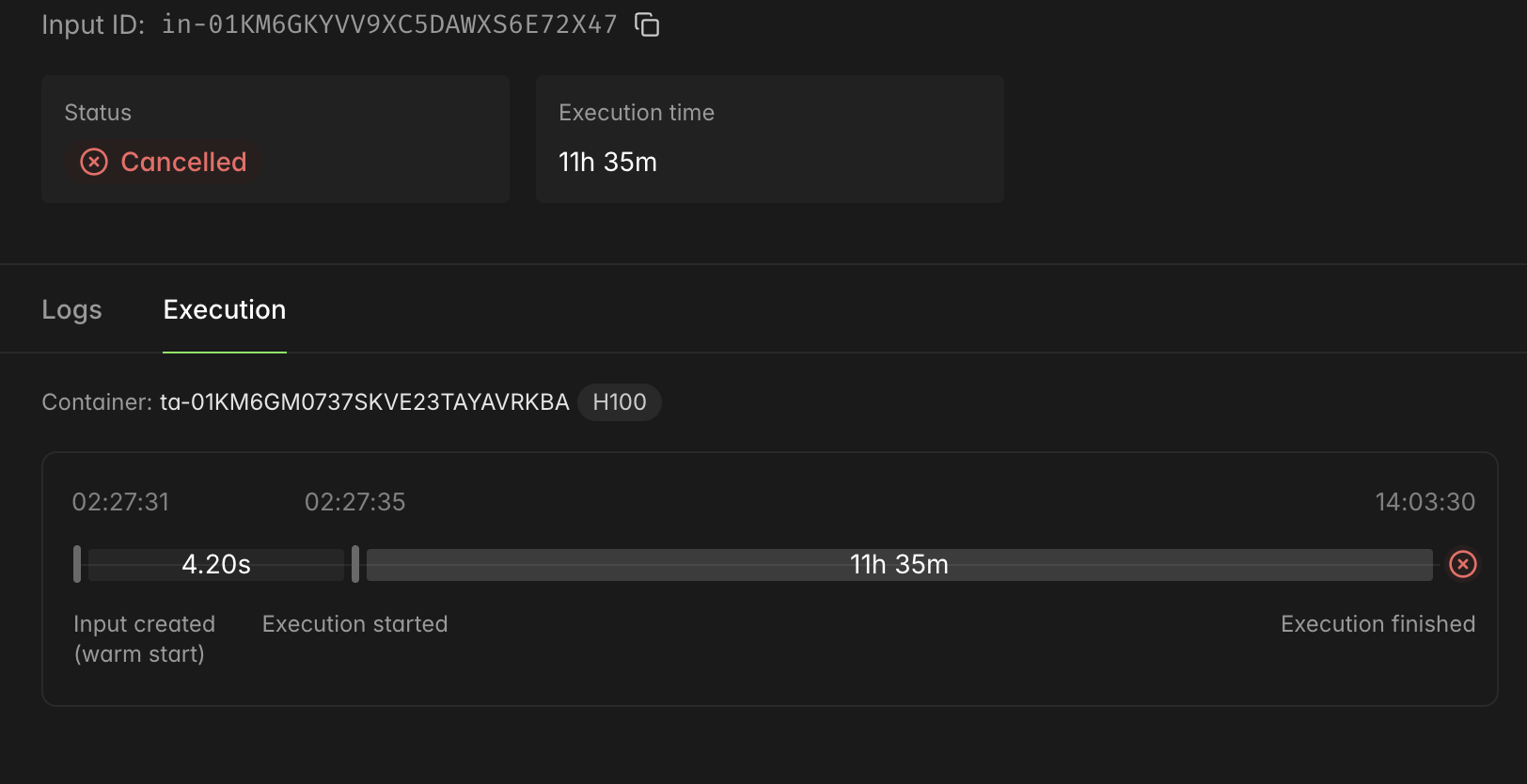

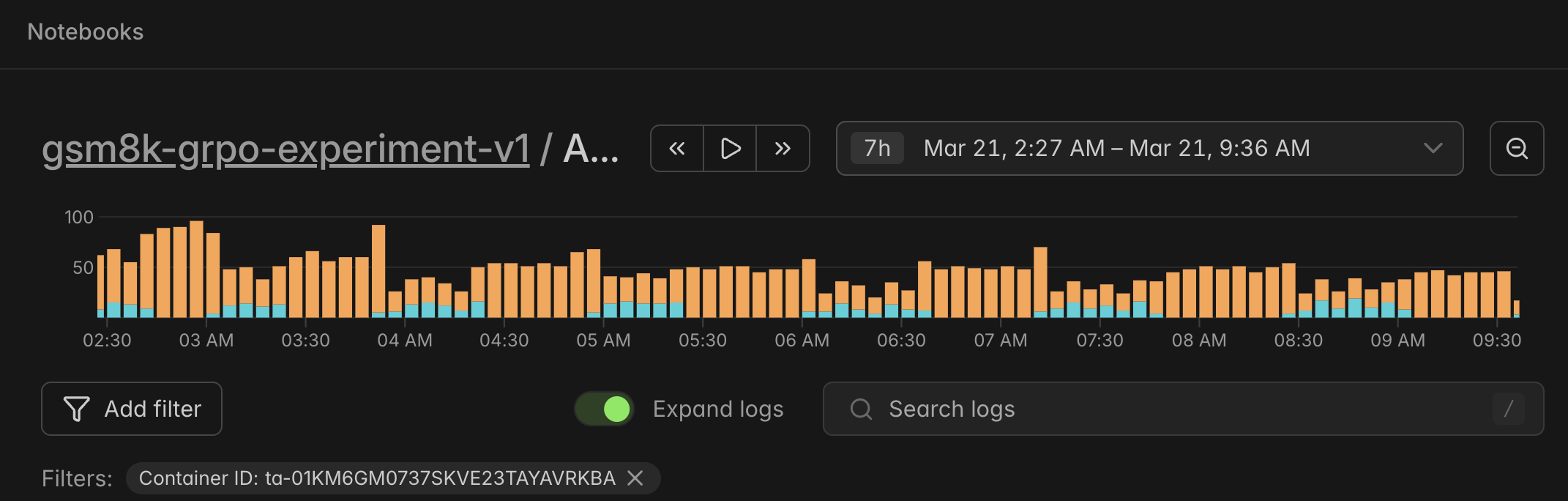

I ran the experiment on Modal using an NVIDIA H100. One practical constraint on my side was my credit limit. I had set my Modal budget to around $45, which is why I did not use the full 7k plus rows of GSM8K and used 5000 rows instead. I ran the command in detached mode and let the job keep going while my Mac and I both slept. I started the run at around 2:30 AM, and by around 9:30 AM the container had stopped because the credits were exhausted.

The logs view made that timing feel even more real to me. You can literally see the run starting in the middle of the night and then flattening out around morning when the credits ran out.

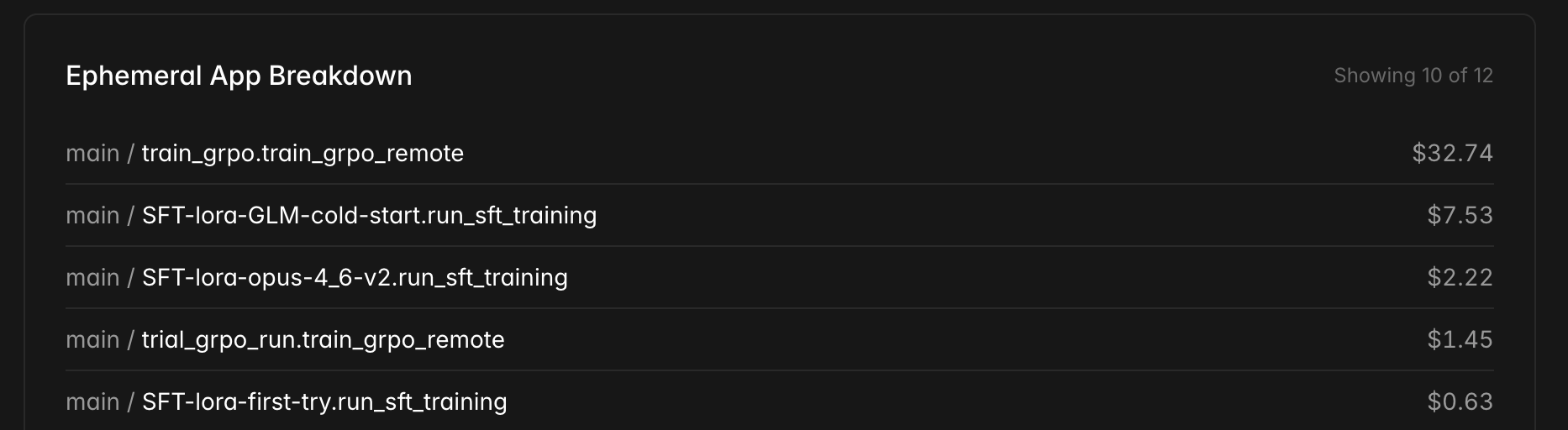

The cost breakdown made the difference between SFT and RL feel very real to me. The main GRPO run alone cost about $32.74, while one of my earlier cold start SFT runs was about $7.53 and another one was about $2.22. So the most expensive line item here was also the run that only made it through about 56% of one epoch and had already started drifting into reward failure. That was painful, but it also made the lesson very obvious. In RL, a weak reward function can waste real money very quickly.

At first I thought the main story here was simply that the run got cut off because I ran out of credits. After going through the logs and graphs, I think that was not actually the important part. The interesting part was that the useful learning had already more or less stopped before the credits cut me off.

Step Math That Cleared My Confusion

The step math took me a while to understand properly, so I want to write it down clearly here.

In my run:

- max_train_samples = 5000

- per_device_train_batch_size = 1

- gradient_accumulation_steps = 4

- effective batch size = 1 x 4 = 4 prompts per optimizer update

- num_generations = 4, meaning each prompt gets 4 rollouts

So one optimizer step was scoring:

- 4 prompts

- 4 rollouts per prompt

- 16 total completions

And total planned steps for one epoch were:

total_steps = max_train_samples / effective_batch_size

total_steps = 5000 / 4

total_steps = 1250

So one full epoch meant 1250 optimizer updates over 5000 prompts, generating 20000 sampled completions in total. The logs made it look like the run stopped around step 560, but the plots run to around step 700, so I am treating it as roughly 700 steps completed. That is 700 / 1250, or about 56% of one epoch.
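The same arithmetic as a few lines of Python, so the numbers can be rechecked with different settings. This follows the accounting used in this post, where the batch size is counted in prompts. It is worth comparing against the step count your own trainer logs, since that depends on how the library counts a batch.

```python
# Settings from this run.
max_train_samples = 5000
per_device_train_batch_size = 1
gradient_accumulation_steps = 4
num_generations = 4

# Prompts and sampled completions that feed one optimizer update.
prompts_per_step = per_device_train_batch_size * gradient_accumulation_steps
completions_per_step = prompts_per_step * num_generations

# Totals for one epoch.
total_steps = max_train_samples // prompts_per_step
total_completions = max_train_samples * num_generations

# Rough progress, using the ~700 steps read off the plots.
steps_completed = 700
fraction_done = steps_completed / total_steps

print(prompts_per_step, completions_per_step, total_steps, total_completions)
print(f"{fraction_done:.0%} of one epoch")
```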

What The Curves Told Me

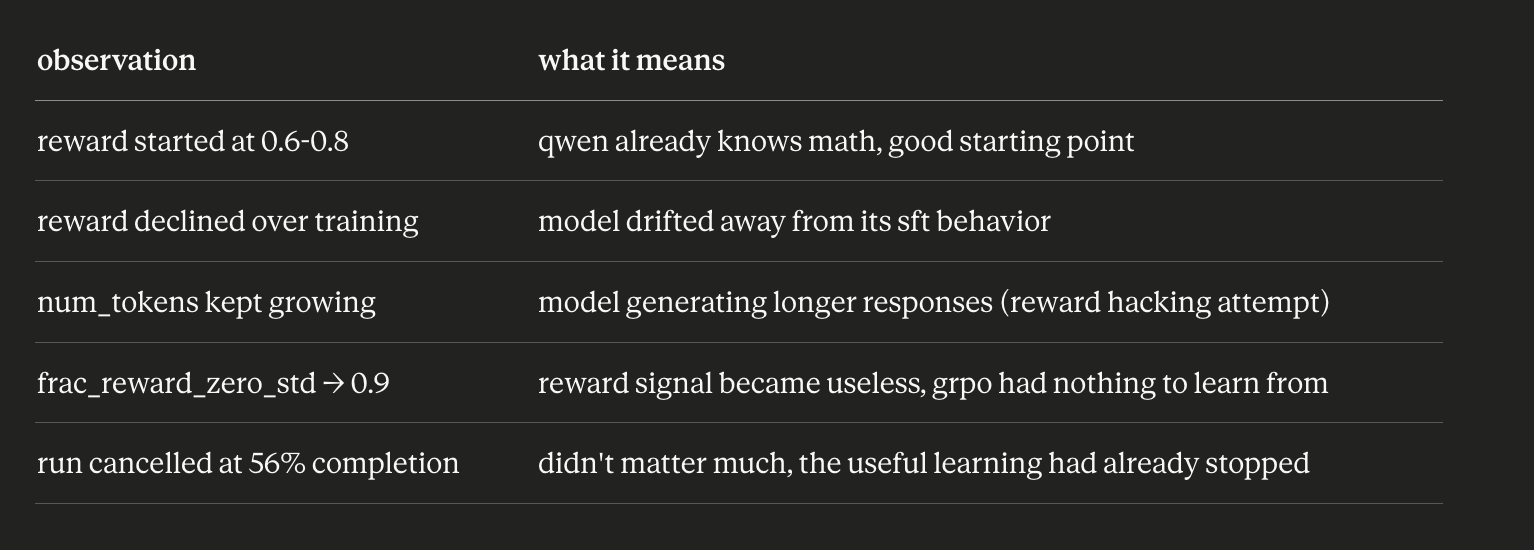

The reward curves were actually the most educational part of this whole run. Phase 1, roughly steps 0 to 200, started surprisingly strong. The reward was already around 0.6 to 0.8. That told me the model was not starting from zero at all. Qwen2.5 1.5B Instruct already knows a fair bit of GSM8K level math from its own instruction tuning, so the baseline was already decent. Also, reward standard deviation was nonzero in the beginning, which meant different rollouts were getting different scores. GRPO still had useful signal to learn from.

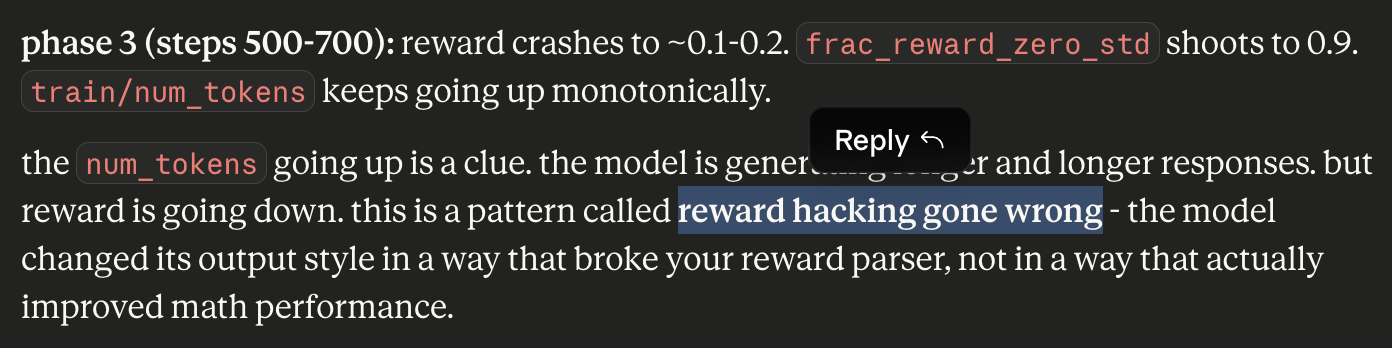

Then in phase 2, roughly steps 200 to 500, reward started drifting down. The run was still moving, but it was not moving in a healthy direction. Then phase 3, roughly steps 500 to 700, is where it got really interesting. Reward crashed to around 0.1 to 0.2. frac_reward_zero_std shot up toward 0.9. train/num_tokens kept going up monotonically. That combination is what made me realize the failure mode was not simply "the model is bad at math." The model was generating longer and longer responses, but reward was getting worse. That is a classic clue that something in the reward setup is going wrong.
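As I understand it, frac_reward_zero_std tracks how often all rollouts for a prompt end up with the same reward. A minimal sketch of that idea, with made-up reward groups and a hypothetical helper name rather than TRL's own implementation:

```python
def frac_zero_std(groups):
    """Fraction of prompt groups whose rollouts all received the same reward.

    Each element of `groups` is the list of rewards for one prompt's rollouts.
    A group with identical rewards has zero standard deviation.
    """
    zero = sum(1 for rewards in groups if max(rewards) == min(rewards))
    return zero / len(groups)


# Made-up example: early in training, most groups contain a mix of right and wrong.
early = [
    [1.0, 0.0, 1.0, 1.0],
    [0.0, 1.0, 0.0, 0.0],
    [1.0, 1.0, 1.0, 1.0],
    [1.0, 0.0, 0.0, 1.0],
]
# Made-up example: late in training, most groups score 0.0 on every rollout.
late = [
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 0.0, 0.0, 0.0],
    [0.0, 1.0, 0.0, 0.0],
]

print(frac_zero_std(early))
print(frac_zero_std(late))
```

With a binary reward, a group where every rollout scores 0.0 looks exactly like a group where every rollout scores 1.0 from the optimizer's point of view: there is no difference inside the group to learn from.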

What Actually Went Wrong

My current reading of the run is that three things went wrong, and all three were design problems, not bugs. First, the reward signal was too sparse. In v1, if the model got the math wrong, reward was just 0.0, full stop. That means GRPO could not distinguish between "wrong but formatted properly" and "completely broken output." Both collapsed to the same score.

Second, the model found a way to escape the reward parser by changing its output style. As the run went on, num_tokens kept growing. The model was getting more verbose, and my parser that was trying to extract the final number from the tail of the response could no longer keep up reliably with that change in style. Third, my system prompt was pushing a 1.5B non thinking model to reason carefully and at length, which likely encouraged longer outputs even more.
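Here is one hypothetical way a "last number in the response" parser can fall out of sync with a more verbose output style. Both completions below are invented for illustration and are not taken from my logs. The math is right in both, but the second one gets a reward of 0.0.

```python
import re

NUMBER_RE = re.compile(r"-?\d[\d,]*(?:\.\d+)?")


def last_number(text):
    """Return the last number in `text` as a float, or None (illustrative parser)."""
    matches = NUMBER_RE.findall(text)
    return float(matches[-1].replace(",", "")) if matches else None


def reward(completion, gold):
    """Binary reward based only on the last number in the completion."""
    predicted = last_number(completion)
    return 1.0 if predicted is not None and abs(predicted - gold) < 1e-6 else 0.0


gold = 18.0

# Hypothetical short completion: the answer is the last number.
concise = "5 + 13 = 18. The answer is 18."

# Hypothetical verbose completion: same answer, but extra text follows it.
verbose = (
    "5 + 13 = 18. The answer is 18. "
    "Let me double check this in 2 more ways before I finish."
)

print(reward(concise, gold))
print(reward(verbose, gold))
```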

So the more accurate meme here is not "the model could not reward hack."

It is this:

my model was already too good for my reward function to handle

Because honestly, the run started at a reward range that already suggests the model had real capability on these problems. The reward fell later not necessarily because the model became dumb at math, but because the reward parser and the output style drifted out of sync.

Why The Cancelled Run Was Not The Main Problem

The container being cancelled at about 56% completion sounds dramatic, but I do not think that is the core issue. By the time the credits ran out, reward had already fallen badly, frac_reward_zero_std was already near collapse territory, and the useful gradient was already fading. So yes, the run got cut off. But if I am being honest, I do not think the extra time would have saved this version of the setup. The useful learning had already slowed down a lot.

What I Want To Change In V2

For v2, I want to move to a two reward setup. Reward 1 will be format reward. Did the response end with ANSWER: {number}? If yes, give a small reward like 0.1 or 0.2. Reward 2 will be correctness reward. Did the number in ANSWER: {number} match the gold answer? If yes, give 1.0. So total reward becomes:

total_reward = format_reward + correctness_reward

This is better because even if the model gets the math wrong, it can still get partial credit for staying inside the output format. That means the reward signal stays informative for longer. Instead of everything collapsing to zero, GRPO can still tell the difference between:

- A wrong answer with decent structure

- A wrong answer with completely messy output

- A correct answer with the expected structure

And that is the main point of reward shaping here. I am not changing what correctness means. I am just giving the model intermediate signals so the gradient does not die so easily.
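A minimal sketch of the two reward setup, written as plain Python functions. The regex, the function names, and the 0.1 bonus are assumptions for illustration. I believe TRL's GRPOTrainer can take a list of reward functions, but the wiring into the trainer is left out here.

```python
import re

# The response must END with "ANSWER: <number>" (trailing whitespace allowed).
ANSWER_RE = re.compile(r"ANSWER:\s*(-?\d[\d,]*(?:\.\d+)?)\s*$")


def parse_answer(completion):
    """Return the number from a trailing 'ANSWER: <number>', or None."""
    match = ANSWER_RE.search(completion.strip())
    if match is None:
        return None
    return float(match.group(1).replace(",", ""))


def format_reward(completion, bonus=0.1):
    """Small reward for ending in the expected format, right or wrong."""
    return bonus if parse_answer(completion) is not None else 0.0


def correctness_reward(completion, gold):
    """1.0 only if the number in the ANSWER line matches the gold answer."""
    predicted = parse_answer(completion)
    if predicted is None:
        return 0.0
    return 1.0 if abs(predicted - gold) < 1e-6 else 0.0


def total_reward(completion, gold):
    return format_reward(completion) + correctness_reward(completion, gold)


gold = 18.0
print(total_reward("5 + 13 = 18\nANSWER: 18", gold))      # correct and formatted
print(total_reward("5 + 13 = 20\nANSWER: 20", gold))      # wrong but formatted
print(total_reward("I think it is around 18 maybe", gold))  # no ANSWER line
```

The three cases land on three different scores, so a group of all-wrong rollouts can still have nonzero reward variance as long as some of them keep the format.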

What This Project Taught Me

This was my first ever RL experiment, and it was honestly the perfect kind of failure. It was not a boring failure where nothing happens. It was a failure that told me something real about the setup.

It told me:

- RLVR is a very nice beginner entry point because verification can be fully automatic

- GRPO with TRL is accessible enough that a small independent experiment is very doable

- Small models can absolutely collapse under RL if the learning setup is not careful

- Reward design matters just as much as model choice

- A run stopping early due to credits can hide the more important story in the curves

I also think this experiment made one thing clear to me. In SFT, a bad formatting decision can hurt you. In RL, a weak reward function can quietly destroy the whole point of the run. And I guess that is why people in this field obsess so much over reward design.

